2. Advanced K3s Configuration - Customizing Your Local Cluster


K3s makes Kubernetes more accessible, but its real strength lies in its flexibility. While the default setup is great for getting started, advanced configurations allow you to fine-tune your cluster to meet specific project needs. From disabling unneeded components to scaling with worker nodes, K3s offers plenty of options for customization. In this guide, we’ll explore advanced configuration techniques to make the most out of your K3s setup.

Understanding K3s Configuration Options

K3s provides various ways to customize your cluster during installation. One key tool for this is the environment variable INSTALL_K3S_EXEC. This variable allows you to pass additional flags to the K3s installer, tailoring the setup process to suit your requirements.

Key Configuration Flags

--disable=<component>: Disable unnecessary default components (e.g., Traefik, metrics-server).

--data-dir=<path>: Specify a custom directory for storing K3s data.

--cluster-init: Initialize a new cluster with embedded etcd on the first server node, so that additional server nodes can join it later.

These options help streamline the installation and optimize your cluster for specific use cases.
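As a minimal sketch of how these flags end up in INSTALL_K3S_EXEC, the helper below joins a list of component names into a single --disable flag. The function name build_disable_flag is hypothetical and not part of K3s; it only prints the flag so you can review it before passing it to the installer.

```shell
#!/bin/sh
# Hypothetical helper (not part of K3s): joins component names with commas
# and prints the matching --disable flag for use in INSTALL_K3S_EXEC.
build_disable_flag() {
    # No components requested: print nothing so the default install is unchanged.
    if [ "$#" -eq 0 ]; then
        return 0
    fi
    old_ifs=$IFS
    IFS=,
    # "$*" joins the arguments using the first character of IFS (a comma here).
    flag="--disable=$*"
    IFS=$old_ifs
    printf '%s\n' "$flag"
}

# Preview the value that would be assigned to INSTALL_K3S_EXEC.
build_disable_flag traefik metrics-server
```

The printed value can then be placed in INSTALL_K3S_EXEC by hand, which keeps the actual install command visible and easy to audit.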

Disabling Unnecessary Features

By default, K3s includes some built-in components like Traefik (a lightweight ingress controller). However, if your project has specific requirements (e.g., using another ingress controller), you can disable these features during installation.

Example: Disabling Traefik

To disable Traefik, run the following command:

curl -sfL https://get.k3s.io | INSTALL_K3S_EXEC="--disable=traefik" sh -

This ensures Traefik is not installed as part of your K3s cluster. You can also disable multiple components by separating them with commas:

curl -sfL https://get.k3s.io | INSTALL_K3S_EXEC="--disable=traefik,metrics-server" sh -

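This flexibility allows you to replace K3s defaults with custom solutions, such as the NGINX ingress controller or a Prometheus-based monitoring stack. Keep in mind that metrics-server backs kubectl top and the Horizontal Pod Autoscaler, so disable it only if you do not need those features or plan to supply resource metrics another way.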
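To confirm that a disabled component is really absent, one option is to scan the pod list in the kube-system namespace. The sketch below assumes pod names start with the component name followed by a hyphen (for example, a pod named traefik-... for Traefik); that naming is an assumption and does not hold for every component, so adjust the prefix as needed. The function name pod_present is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: reads `kubectl get pods -n kube-system --no-headers`
# output on stdin and succeeds if any pod name starts with "<prefix>-".
pod_present() {
    awk -v prefix="$1-" '
        index($1, prefix) == 1 { found = 1 }   # first column is the pod name
        END { exit(found ? 0 : 1) }
    '
}

# Example usage on a running cluster (assumes kubectl is configured):
#   kubectl get pods -n kube-system --no-headers | pod_present traefik \
#       && echo "traefik is still running" \
#       || echo "no traefik pod found"
```

Matching only on the start of the name avoids false positives from helper pods that merely contain the component name somewhere in the middle.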

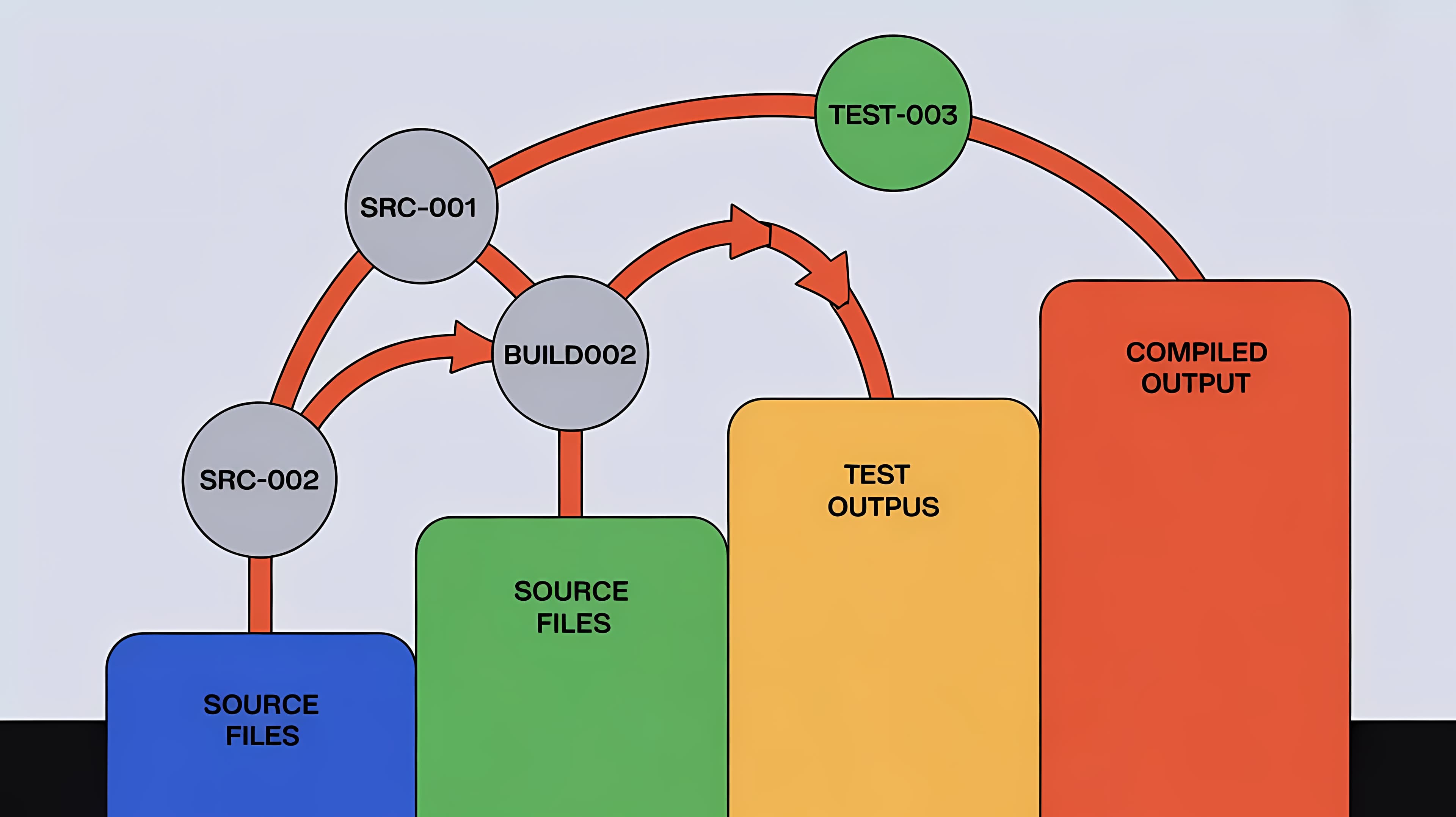

Adding Worker Nodes

Scaling your cluster with additional worker nodes is straightforward in K3s.

Step 1: Retrieve the Node Token

On the control plane node, the token required to join worker nodes is stored at /var/lib/rancher/k3s/server/node-token. The file is readable only by root, so retrieve it with sudo:

sudo cat /var/lib/rancher/k3s/server/node-token

Step 2: Add a Worker Node

Run the following command on the worker node, replacing <IP> with the control plane node’s IP address and <TOKEN> with the retrieved node token:

curl -sfL https://get.k3s.io | K3S_URL=https://<IP>:6443 K3S_TOKEN=<TOKEN> sh -

Once the installation is complete, the worker node will automatically join the cluster.
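Because the join command combines two values that are easy to mistype, it can help to print it for review before running it. The following is a small sketch under that assumption; print_join_command is a hypothetical function name, and the IP address and token in the example call are placeholders, not real values.

```shell
#!/bin/sh
# Hypothetical wrapper: prints the worker install command instead of running
# it, so the server address and token can be checked first.
print_join_command() {
    server_ip=$1
    token=$2
    # Refuse to build a command with a missing value.
    if [ -z "$server_ip" ] || [ -z "$token" ]; then
        echo "usage: print_join_command <server-ip> <token>" >&2
        return 1
    fi
    printf 'curl -sfL https://get.k3s.io | K3S_URL=https://%s:6443 K3S_TOKEN=%s sh -\n' \
        "$server_ip" "$token"
}

# Placeholder values for illustration only.
print_join_command 192.0.2.10 example-token
```

Copy the printed line to the worker node once the values look right.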

Step 3: Verify the Node Addition

On the control plane node, check the cluster’s nodes:

kubectl get nodes

You should see the worker node listed along with the control plane node(s).
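A freshly joined node can take a moment to become Ready. As a rough sketch, the helper below counts the nodes whose STATUS column is exactly Ready in the output of kubectl get nodes --no-headers. The function name count_ready_nodes is hypothetical, and the sketch assumes the default column layout where STATUS is the second column.

```shell
#!/bin/sh
# Hypothetical helper: reads `kubectl get nodes --no-headers` output on stdin
# and prints how many nodes report a STATUS of exactly "Ready".
count_ready_nodes() {
    # Column 2 is STATUS in the default output; "NotReady" and
    # "Ready,SchedulingDisabled" are deliberately not counted.
    awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Example usage on the control plane node (assumes kubectl is configured):
#   kubectl get nodes --no-headers | count_ready_nodes
```

Comparing the printed count with the number of nodes you expect is a quick check that every worker has finished joining.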

Use Cases for Advanced Configurations

Resource-Constrained Environments

If you’re working in an environment with limited resources (e.g., a Raspberry Pi or a small VM), disabling components you do not use can free up memory and CPU.

Example:

curl -sfL https://get.k3s.io | INSTALL_K3S_EXEC="--disable=traefik,local-storage" sh -

Custom Ingress Controller

If your project requires an ingress controller other than Traefik (e.g., NGINX or HAProxy), disable Traefik and deploy your preferred solution.

Multi-Node Clusters

For testing or distributed applications, adding worker nodes can simulate a production-like cluster on local hardware or VMs.

Example:

Control plane:

curl -sfL https://get.k3s.io | INSTALL_K3S_EXEC="--cluster-init" sh -

Workers:

curl -sfL https://get.k3s.io | K3S_URL=https://<control-plane-ip>:6443 K3S_TOKEN=<token> sh -

Conclusion

K3s is not only lightweight and easy to set up but also highly customizable. By using advanced configuration options, you can tailor your local Kubernetes cluster to meet the unique demands of your projects, whether it’s for resource-constrained environments, custom workloads, or multi-node setups.

Next Steps

Explore other configuration flags in the K3s documentation.

Experiment with deploying and benchmarking your applications on different configurations.

Try integrating tools like Helm or CI/CD pipelines with your customized K3s setup.

Got specific use cases or challenges with K3s? Share them below, and let’s discuss how to optimize your cluster further!